Virtual Reality: The Sight

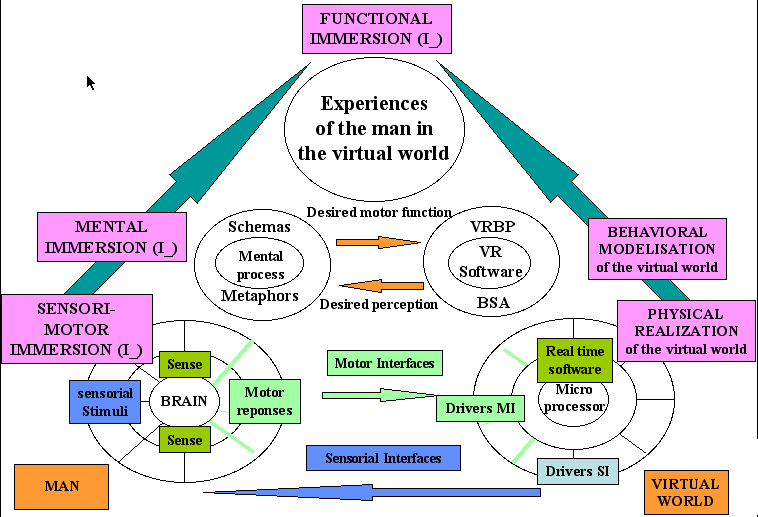

Technocentric Designer Diagram

Our Visual System

Extract from http://cs.wpi.edu/~matt/courses/cs563/talks/brian1.html by Brian Lingard

We obtain most of our knowledge of the world around us through our eyes. Our visual system processes information in two distinct ways: conscious and preconscious processing. Looking at a photograph, or reading a book or map, requires conscious visual processing and hence usually requires some learned skill. Preconscious visual processing, however, describes our basic ability to perceive light, color, form, depth and movement. Such processing is more autonomous, and we are less aware that it is happening.

Physically, our eyes are fairly complicated organs. Specialized cells form structures which perform several functions: the pupil acts as the aperture, where muscles control how much light passes; the crystalline lens focuses light by using muscles to change its shape; and the retina is the workhorse, converting light into electrical impulses for processing by our brains. Our brain performs visual processing by breaking down the neural information into smaller chunks and passing it through several filter neurons. Some of these neurons detect only drastic changes in color; other neurons detect only vertical edges or horizontal edges.
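As a loose software analogy for the orientation-selective filtering described above (not a model of real neurons), the sketch below slides two small kernels over a toy brightness grid. The kernel values, the function name `filter_response`, and the 4x4 image are all illustrative assumptions.

```python
def filter_response(image, kernel):
    """Slide a 3x3 kernel over a 2D list of brightness values (no padding)."""
    rows, cols = len(image), len(image[0])
    out = []
    for r in range(rows - 2):
        row = []
        for c in range(cols - 2):
            total = 0
            for i in range(3):
                for j in range(3):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        out.append(row)
    return out


# Hypothetical kernels: one responds to brightness changes from left to
# right (vertical edges), the other to changes from top to bottom
# (horizontal edges).
VERTICAL_EDGE = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
HORIZONTAL_EDGE = [[-1, -1, -1], [0, 0, 0], [1, 1, 1]]

# Toy image: dark left half, bright right half, i.e. a single vertical edge.
image = [[0, 0, 1, 1] for _ in range(4)]

vertical = filter_response(image, VERTICAL_EDGE)
horizontal = filter_response(image, HORIZONTAL_EDGE)
print("vertical-edge filter:  ", vertical)
print("horizontal-edge filter:", horizontal)
```

On this image the vertical-edge filter produces a nonzero response everywhere it overlaps the boundary, while the horizontal-edge filter stays at zero, which mirrors the idea of separate detectors tuned to separate orientations.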

Depth information is conveyed in many different ways. Static depth cues include interposition, brightness, size, linear perspective, and texture gradients. Motion depth cues come from the effect of motion parallax, where objects which are closer to the viewer appear to move more rapidly against the background when the head is moved back and forth. Physiological depth cues convey information in two distinct ways: accommodation, which is how our eyes change their shape when focusing on objects at different distances, and convergence, which is a measurement of how far our eyes must turn inward when looking at objects closer than 20 feet. We obtain stereoscopic cues by comparing the left and right views coming from each of our eyes and extracting the relevant depth information.
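To give a feel for why convergence is mainly useful at close range, here is a minimal sketch that computes the angle between the two lines of sight for a point straight ahead. It assumes simple symmetric geometry and an interpupillary distance of 63 mm; that figure and the function name `convergence_angle_deg` are assumptions for illustration, not values from the text.

```python
import math

FEET_TO_M = 0.3048


def convergence_angle_deg(distance_m, ipd_m=0.063):
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m. ipd_m is an assumed eye separation."""
    return math.degrees(2 * math.atan((ipd_m / 2) / distance_m))


for feet in (1, 5, 20, 100):
    angle = convergence_angle_deg(feet * FEET_TO_M)
    print(f"{feet:>4} ft: {angle:.2f} degrees")
```

Under these assumptions the angle is more than ten degrees at 1 foot but only about 0.6 degrees at 20 feet, so beyond that range the eyes barely turn inward and the cue carries little information.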

Our sense of visual immersion in VR comes from several factors which include field of view, frame refresh rate, and eye tracking. Limited field of view can result in a tunnel vision feeling. Frame refresh rates must be high enough to allow our eyes to blend together the individual frames into the illusion of motion and limit the sense of latency between movements of the head and body and regeneration of the scene. Eye tracking can solve the problem of someone not looking where their head is oriented. Eye tracking can also help to reduce computational load when rendering frames, since we could render in high resolution only where the eyes are looking.
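The two ideas above, a per-frame time budget and gaze-dependent resolution, can be sketched roughly as follows. This is an illustrative outline only: the function names, the 5 and 30 degree thresholds, and the 0.25 minimum scale are assumptions, not figures from the text or from any particular renderer.

```python
import math


def frame_budget_ms(refresh_hz):
    """Time available to regenerate the scene for one frame."""
    return 1000.0 / refresh_hz


def resolution_scale(region_dir, gaze_dir,
                     full_res_deg=5.0, falloff_deg=30.0, min_scale=0.25):
    """Choose a render-resolution scale for a screen region from the angle
    between the region's direction and the gaze direction.
    Both directions are unit 3D vectors; all thresholds are assumed values."""
    dot = sum(r * g for r, g in zip(region_dir, gaze_dir))
    angle = math.degrees(math.acos(max(-1.0, min(1.0, dot))))
    if angle <= full_res_deg:
        return 1.0            # where the eye is looking: full resolution
    if angle >= falloff_deg:
        return min_scale      # far periphery: coarsest resolution
    # Linear blend between full and minimum resolution in between.
    t = (angle - full_res_deg) / (falloff_deg - full_res_deg)
    return 1.0 - t * (1.0 - min_scale)


gaze = (0.0, 0.0, 1.0)
print(frame_budget_ms(50))                      # milliseconds per frame
print(resolution_scale((0.0, 0.0, 1.0), gaze))  # region at the gaze point
print(resolution_scale((1.0, 0.0, 0.0), gaze))  # region 90 degrees away
```

A higher refresh rate shrinks the budget returned by `frame_budget_ms`, which is where lowering resolution away from the gaze point can help: fewer pixels need to be computed in the time available.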

The sense of virtual immersion is usually achieved via some means of position and orientation tracking. The most common means of tracking include optical, ultrasonic, electromagnetic, and mechanical. All of these means have been used on various head-mounted display (HMD) devices. HMDs come in three basic varieties: stereoscopic, monocular, and head-coupled. The earliest stereoscopic HMD was Ivan Sutherland's Sword of Damocles, which was built in 1968 while he was teaching at Harvard. It got its name from the large mechanical position-sensing arm which hung from the ceiling and made the device ungainly to wear. NASA has built several HMDs, chiefly using LCD displays, which had poor resolution. The University of North Carolina has also built several HMDs using such items as LCD screens, magnifying optics and bicycle helmets. VPL Research's EyePhone series were the first commercial HMDs. A good example of a monocular HMD is the Private Eye by Reflection Technologies of Waltham, MA. This unit is just 1.2 x 1.3 x 3.5 inches and is suspended by a lightweight headband in front of one eye. The wearer sees a 12-inch monitor floating in mid air about 2 feet in front of them. The BOOM is a head-coupled HMD and was developed at NASA's Ames Research Center. The BOOM uses two 2.5-inch CRTs mounted within a small black box that has hand grips on both sides and is attached to the end of articulated counter-balanced arms which serve as the position sensor.
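For a rough sense of how much of the visual field the Private Eye's virtual image occupies, the sketch below converts the figures quoted above (a 12-inch image seen at about 2 feet) into a visual angle. It assumes the 12 inches is the extent of the image along the measured direction and that the image is viewed head-on; the source does not say whether the figure is a diagonal, and the function name `visual_angle_deg` is hypothetical.

```python
import math


def visual_angle_deg(size, distance):
    """Angle subtended by an object of a given size viewed head-on.
    size and distance must be in the same unit."""
    return math.degrees(2 * math.atan((size / 2) / distance))


# 12-inch virtual image at 2 feet (24 inches), as quoted for the Private Eye.
angle = visual_angle_deg(12, 24)
print(f"{angle:.1f} degrees")
```

Under these assumptions the image spans roughly 28 degrees, a small window compared with the full human field of view, which fits the unit's role as a monocular display rather than an immersive one.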